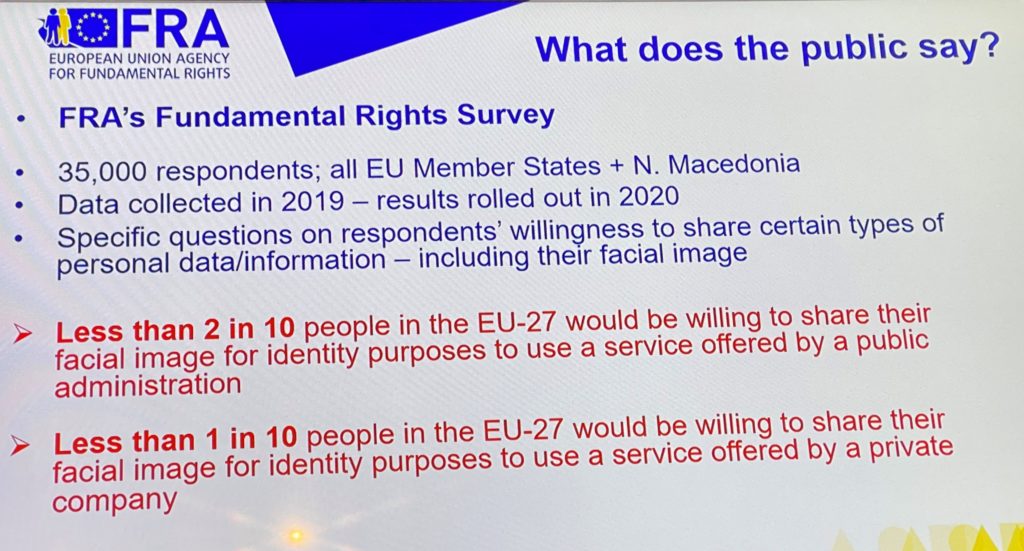

Fewer than 2 in 10 Europeans are willing to share their biometric data with public authorities, according to a survey by the European Union Agency for Fundamental Rights (FRA), the early results of which were released on March 3, 2020 during a panel on facial recognition in policing organized at the Microsoft Data Science & Law Forum.

The Fundamental Rights Survey (FRS) aims to provide a comprehensive set of comparable data on people’s experiences and opinions concerning the way their fundamental rights are protected. It focuses on areas including data protection, equal treatment, access to justice, consumer rights and crime victimisation.

As part of the FRS, an important survey on data protection in Europe was carried out, with more than 35,000 Europeans from all EU Member States responding. The data were collected in 2019 and should start being released in May 2020, on the occasion of the second anniversary and assessment of the General Data Protection Regulation (GDPR).

During the Brussels facial recognition panel, Joanna Goodey, Head of Research and Data Unit at the FRA, released some initial data from the survey concerning responses to questions related to the sharing of biometric data.

According to the slide presented by Joanna Goodey (figure 1), Europeans are very reluctant to share their biometric data with public authorities, and even more so to share them with a private company.

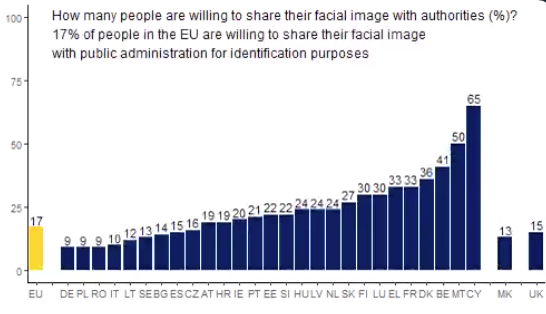

Following this slide and a tweet by the AI-Regulation team present in Brussels, FRA tweeted some more details about the results of the survey on this point. Here is the table released by FRA:

These findings underline a general reluctance among EU citizens to share their biometric data. According to the survey, only 17% of people in the EU are willing to share their facial image with the public administration for identification purposes.

While citizens in some countries seem more inclined to share these sensitive data (such as Cyprus, with 65% of respondents in favor, or Malta, with 50%), citizens in other countries are much more reluctant. Not surprisingly, German citizens are extremely reluctant to share their data with the public administration for identification purposes: only 9% of respondents were willing to do so. The situation is the same in some former communist countries, such as Poland and Romania, with the same low percentage of 9%. In France, on the other hand, 33% of citizens were willing to share their facial image with authorities for identification purposes.

The released data do not provide enough detail about the exact scope of the survey or the meaning of the phrase “sharing their facial image with public authorities for identification purposes”. Does “identification” refer to the usual meaning of this term in the facial recognition context, in other words the use of facial recognition techniques (FRTs) to compare a single face against a data set (for instance, to compare a video still of an assailant captured during a robbery to a dataset of known faces)? Could “sharing with public authorities for identification purposes” thus mean the inclusion of the respondents’ biometric data in the relevant databases?

Many questions remain, and it will certainly be necessary to wait for the publication of the full survey before reading too much into these data.

However, the early findings of the Fundamental Rights Survey released by FRA seem to contradict the claim made by some law enforcement officials and politicians that “people are in favor of facial recognition”. For instance, they do not seem to support the statement by the Mayor of Nice (France), C. Estrosi, that “today everyone is asking for [facial recognition], we are behind, we have to catch up”.

As Joanna Goodey said during the panel, public perception of the use of facial recognition depends on the context: if people are asked about the possible use of FRTs in the fight against terrorism, they tend to agree; but when they are asked about their own biometric data being used, they tend to disagree.

The results of the survey will definitely feed into policy discussions concerning fundamental rights, and will inform EU and national policy makers when making decisions about future laws or rules on facial recognition.

Thanks to the research fellows of AI-Regulation.com for their help in drafting this piece.

These statements are attributable only to the author, and their publication here does not necessarily reflect the view of the other members of the AI-Regulation Chair or any partner organizations.

This work has been partially supported by MIAI @ Grenoble Alpes (ANR-19-P3IA-0003).