Public authorities encounter considerable difficulties procuring AI systems, particularly with the complex EU AI Act and insufficient implementation guidance. Challenges include definitional ambiguities, a lack of specific procurement frameworks, and expertise gaps hindering effective evaluation of AI technologies. The need for clearer national-level guidance is paramount to navigate these complexities and ensure a compliant AI procurement that serves the public interest.

Public authorities buying Artificial Intelligence (AI) systems that incorporate General-Purpose AI (GPAI) models face issues arising from the draft EU code of practice, which proposes making risk measures related to fundamental rights and democracy voluntary. EU lawmakers view this as contradicting the AI Act’s intention that the assessment and mitigation of such systemic risks be mandatory, potentially compromising the trustworthiness and compliance of procured systems in these critical areas. Given also the lack of clarity in the AI Act implementation guidelines, which stems from insufficient consultation with EU Member States, public authorities may face considerable challenges in ensuring that the AI systems they procure fully meet the Act’s requirements for compliance and transparency.

These issues underscore the critical importance of thorough due diligence by public authorities throughout the procurement process. The possibility of the next German government asking for a revision of the EU AI Act or pushing for a national implementation that is “innovation friendly” could impact the compliance obligations for AI systems and require public authorities to adapt their procurement strategies. The AI Act itself, a large and technically detailed piece of legislation, presents a structural, linguistic and relational complexity that can be difficult for procurement teams to fully comprehend and consistently interpret. This is particularly challenging given the frequent imbalance of expertise between public authorities, often under-resourced, and private AI companies during procurement negotiations.

Section I will provide a detailed examination of the AI Act’s deployment provisions and their direct impact on public authorities involved in acquiring AI systems, highlighting the obligations and considerations that must be integrated into the procurement lifecycle, including the crucial aspect of verifying compliance and conducting Fundamental Rights Impact Assessments (FRIAs). Section II will then explore the key challenges confronting public authorities in this domain, such as the absence of a cohesive guidance framework, definitional ambiguities surrounding AI, the asymmetry of expertise between procurers and vendors, and issues related to data quality and technological infrastructure. Subsequently, Section III will present comparative case studies of Germany, Italy, and Spain, offering insights into the diverse experiences and approaches of EU Member States in navigating the complexities of AI public procurement. Finally, Section IV will engage in a broader discussion, synthesizing the preceding analysis to underscore the significant challenges posed by the AI Act’s inherent complexity and the critical need for clearer, practical guidance at both the EU and national levels to ensure effective, ethical, and compliant AI procurement that serves the public interest.

I. AI Act: Impact of Deployment on Public Buyers

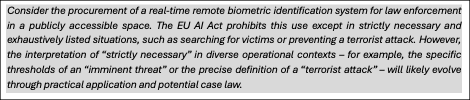

Regulation (EU) 2024/1689, also known as the AI Act, aims to regulate the placing on the market, putting into service and use of AI systems in the EU. Although it does not contain a specific section on public procurement, several of its provisions directly affect public sector buyers of AI systems.[1]

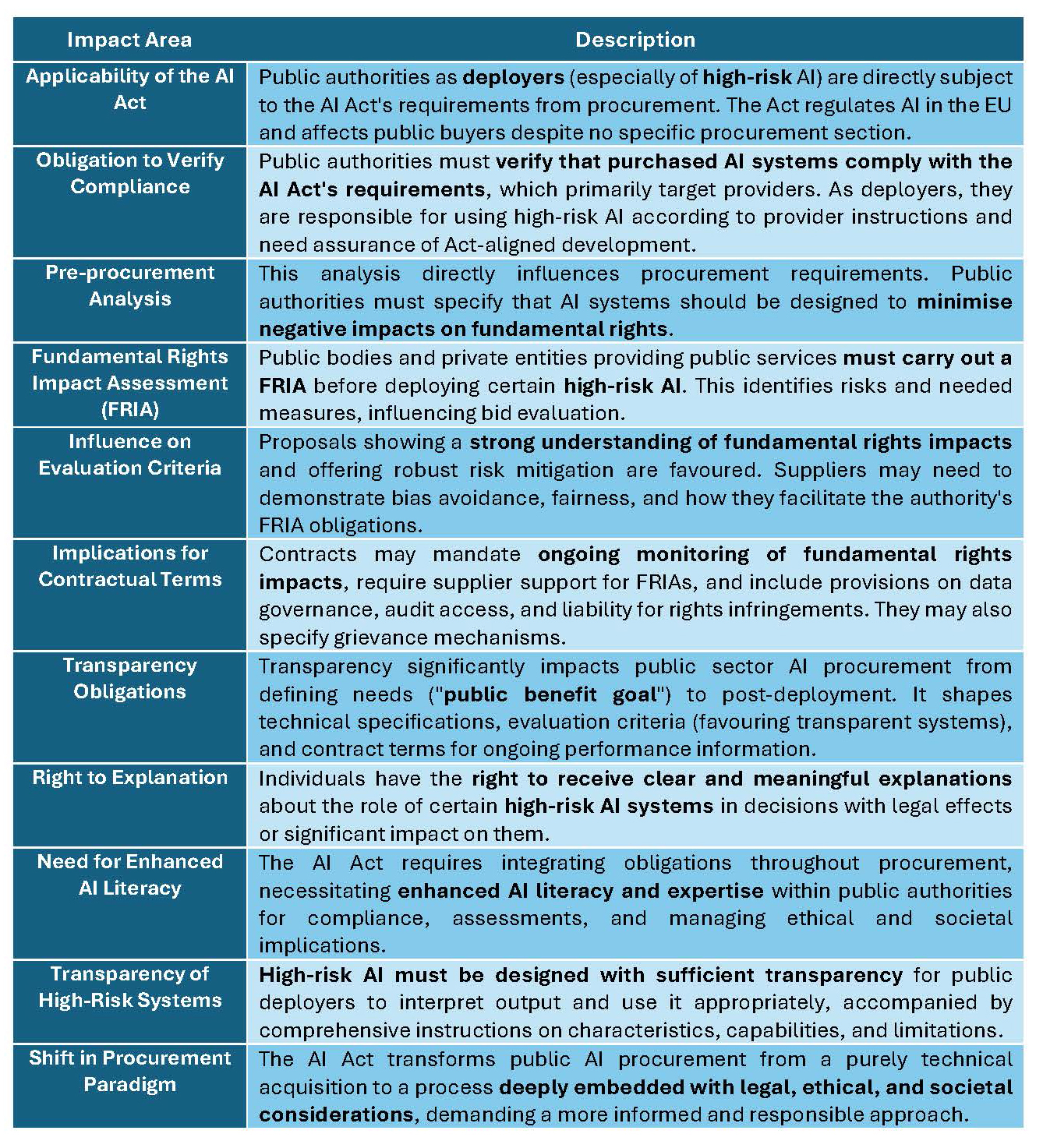

The deployment provisions of the AI Act have a significant impact on public authorities that are in the process of buying AI systems, imposing a range of obligations and considerations that must be integrated into the procurement lifecycle and beyond (Table 1). Public authorities acting as deployers of AI systems, particularly those classified as high-risk,[2] are directly subject to the requirements of the AI Act, necessitating a proactive approach to ensure compliance from the moment of procurement.[3]

A fundamental aspect of this impact is the obligation for public authorities to verify that the AI systems they intend to purchase comply with the AI Act’s requirements that primarily target providers.[4] As deployers, public authorities are responsible for using high-risk AI systems in accordance with the provider’s instructions.[5] To do this effectively, they need assurance that the system has been developed and assessed according to the standards set out in the AI Act, even if the initial legal burden for meeting those standards falls on the provider.

This pre-procurement analysis has a direct influence on the definition of requirements and technical specifications in the procurement documentation. Public authorities will need to specify that the AI system should be designed and function in a way that minimizes potential negative impacts on fundamental rights. Before deploying certain high-risk AI systems, deployers that are bodies governed by public law, or private entities providing public services, must carry out a Fundamental Rights Impact Assessment (FRIA).[6] This assessment must identify specific risks to individuals or groups and the measures to be taken if these risks materialize.[7] For example, when procuring an AI system for facial recognition, the authority, informed by the FRIA considerations, might include requirements for accuracy across different demographic groups and safeguards against discriminatory outcomes. The FRIA thus influences the evaluation criteria used to assess bids from potential suppliers.

Public authorities are likely to give greater weight to proposals that demonstrate a strong understanding of the impact on fundamental rights and offer robust risk mitigation mechanisms. Suppliers may be asked to demonstrate how their AI system has been designed and tested to avoid bias and ensure fairness, and how it will facilitate the authority’s ability to meet its FRIA obligations. The ability to provide comprehensive documentation and transparency on the operation of the system will be crucial to enable the authority to carry out a thorough impact assessment. The FRIA also has implications for the contractual terms agreed with the supplier.

Public authorities may include clauses that mandate ongoing monitoring of the AI system’s impact on fundamental rights and require the supplier to provide support for conducting and updating the FRIA. There might be provisions related to data governance, access to source code for auditing purposes (where appropriate), and liabilities in case the AI system infringes on fundamental rights. The contract may also specify the need for mechanisms to address grievances and provide remedies to individuals affected by the AI system’s decisions. It should not be forgotten that the AI Act gives individuals the right to receive clear and meaningful explanations about the role of certain high-risk AI systems in decision-making processes, particularly where a decision based on the system’s output has legal effects or significantly affects those individuals.[8]

Transparency obligations for public sector bodies deploying AI systems have a significant impact on their approach to AI procurement. These obligations permeate the different stages of the procurement process, from defining requirements to post-deployment management. They influence the pre-procurement phase by forcing authorities to develop a well-defined understanding of their needs and of how AI is expected to meet them. Authorities need to articulate the “public benefit goal” that the AI system is expected to achieve, which provides an anchor for the entire procurement process. The need for transparency also shapes the definition of technical specifications in procurement documents: authorities need to set clear requirements for how the AI system will operate and what data it will process. Transparency obligations may also affect the evaluation criteria used to assess bids, as public authorities will likely favor providers who demonstrate a commitment to transparency in their AI systems. Contract terms are influenced as well. Public authorities could include clauses requiring providers to supply ongoing information about the AI system’s performance, updates and any significant changes to its algorithms or data processing.

Table 1. Impacts of EU AI Act on Public Authorities Buying AI systems

Consequently, the AI Act necessitates a fundamental shift in how public authorities approach the procurement of AI systems, requiring the integration of these various obligations and considerations throughout the entire process, from defining requirements and evaluating bids to the final deployment and ongoing monitoring of the systems. The legislation implicitly highlights the growing need for enhanced AI literacy and expertise within public authorities to ensure they can effectively navigate the complexities of compliance, conduct necessary assessments, and manage the ethical and societal implications of AI deployment. Furthermore, the AI Act stipulates that high-risk AI systems should be designed with sufficient transparency to enable public authority deployers to interpret their output and use them appropriately and should be accompanied by comprehensive instructions for use detailing their characteristics, capabilities, and limitations. In essence, the AI Act transforms the procurement of AI by public authorities from a purely technical acquisition to a process deeply embedded with legal, ethical, and societal considerations, demanding a more informed and responsible approach.

II. Key Challenges in Public Sector AI Procurement

There is a significant and growing interest in the use of AI systems within both the private and public sectors. The motivations behind this interest include the hope of increasing efficiency and speed in decision-making, saving costs, and achieving better overall results.[9]

Public procurement of AI systems faces a number of complex challenges that hinder the ability of public organizations to effectively acquire and deploy these technologies in a way that serves the public interest. For example, public purchasers face difficulties in choosing the right type of procurement procedure to suit the unique characteristics of AI procurement, with negotiated procedures and innovation partnerships potentially valuable but requiring careful consideration.[10]

A fundamental obstacle is the absence of a cohesive, unambiguous, and actionable framework of guidance specifically designed for the procurement of AI within the public sector. The existing landscape of regulations and guidelines is often fragmented, employing a variety of terms to refer to AI and related concepts without clear and consistent definitions. This lack of terminological clarity, coupled with a dearth of practical advice on how to implement overarching principles such as value for money, social value, impact assessments, and transparency, places a significant burden on procurement teams who must navigate these complexities and interpret guidance documents often without sufficient expertise.

EU Member States recently vented their frustration at the European Commission for not having properly consulted them on key administrative documents for the implementation of the AI Act, specifically the guidelines on the definition of AI systems and on prohibited use cases. This lack of consultation, according to one national official, resulted in a ‘lack of clarity in the text, especially in the guidelines on prohibitions’. The episode directly illustrates the complexity resulting from the lack of a well-defined and jointly developed framework. The frustration expressed by Member States highlights that, without a clear and agreed understanding of what constitutes an ‘AI system’ and which use cases are ‘prohibited’, there is a risk of inconsistent interpretation and application of the AI Act across Member States.[11] This lack of common understanding may lead to fragmentation rather than a unified approach to AI regulation in the EU.

The two proposals from the Procurement of AI Community for standard contractual clauses for the procurement of high-risk and non-high-risk AI by public organizations, published in 2023,[12] offered several important contributions to the development of clearer and more consistent guidelines for AI procurement in the public sector. The updated version further enhanced this contribution by providing a full version aligned with the adopted EU AI Act for high-risk AI and a customizable light version for non-high-risk AI. Crucially, the commentary now available clarifies how the clauses can be used in practice, directly addressing a key challenge in the implementation of any guidelines.

However, despite these significant contributions, difficulties remain in achieving generally clear and consistent guidance. The application of these clauses, even the updated versions with commentary, still requires a degree of case-by-case assessment by public organizations to ensure proportionality and appropriateness to their specific needs. This inherent flexibility, while beneficial for tailoring procurement, may lead to variations in application across different organizations. The clauses are not a complete procurement framework and need to be accompanied by broader agreements covering issues such as intellectual property, payment and liability, requiring public sector organizations to ensure consistency across all elements of the contract.

While the high-risk clauses are now aligned with the EU AI Act, the interpretation and practical application of the Act itself in different AI procurement contexts will continue to evolve and may require ongoing updates and clarifications to the model clauses.

The distinction between high-risk and non-high-risk AI, while legally defined, may still require careful interpretation in specific procurement scenarios, potentially leading to some variations in application.[13]

The reliance on the EU AI Act’s definition of ‘AI system’, which is a broad and evolving concept, introduces a degree of inherent flexibility and potential for different interpretations.

Adding to this complexity is the difficulty for procurers to accurately assess and categorize the diverse range of AI technologies available, hindering their ability to identify and apply the most relevant guidance to their specific procurement needs. This definitional uncertainty is compounded by a significant asymmetry in knowledge and expertise between public sector procurement teams and the private sector vendors supplying AI solutions.

Public organizations, particularly local government bodies, often lack the in-house technical understanding and resources to critically evaluate the claims made by AI vendors, conduct thorough due diligence on the technology’s suitability and potential impacts, or fully comprehend the associated ethical implications. This reliance on vendor expertise can create a power imbalance that may disadvantage the public sector.

The effective deployment of AI in the public sector is also significantly constrained by issues related to data quality and the underlying technological infrastructure. Many public organizations struggle with fragmented, poorly curated, and insufficiently integrated data systems, which can negatively impact the reliability and fairness of AI outputs.

Moreover, inadequate infrastructure may not be equipped to support the demands of new AI technologies, leading to challenges in implementation and maintenance. These data and infrastructure limitations can also hinder the ability of procurers to adequately address their statutory obligations concerning data protection and equality. A core difficulty in the public procurement of AI therefore lies in the inherent technological uncertainty surrounding how these systems function in practice and the challenges in evaluating their real-world impacts, including potential societal consequences.

These interconnected challenges underscore the critical need for a more coherent, collaborative, and adequately resourced approach to the public procurement of AI systems to ensure they are indeed effective, ethical, and genuinely serve the diverse needs of the public.

III. National Perspectives on AI Procurement: Case Studies of Germany, Italy, and Spain

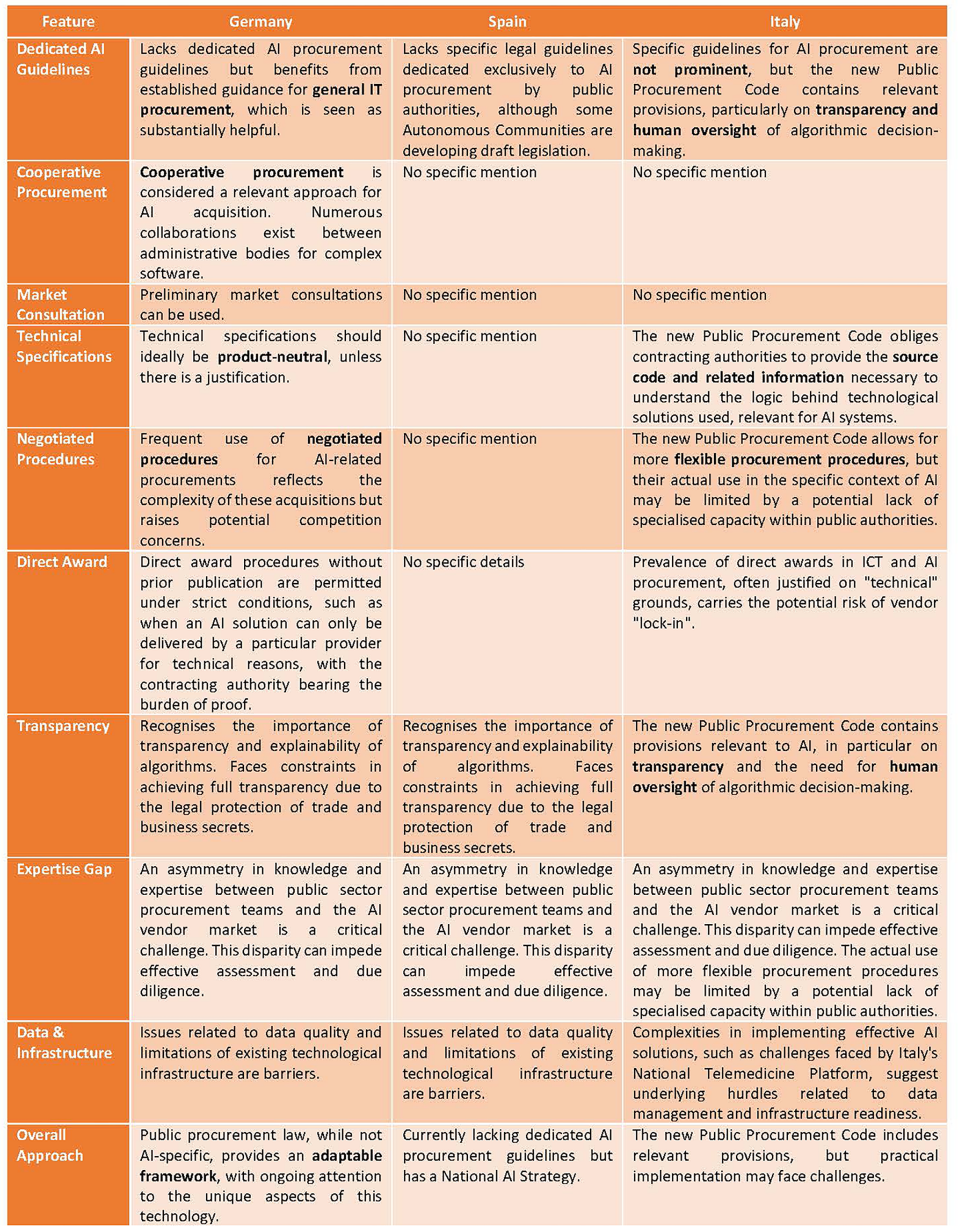

A comprehensive cross-comparative assessment of the challenges of public procurement of AI systems in Germany, Spain and Italy reveals several common and distinct experiences. A key overarching theme is the complexity of procuring and deploying AI technologies effectively and ethically in the public sector. This complexity is exacerbated by the difficulty of selecting appropriate procurement processes to suit the unique characteristics of AI, with negotiated procedures and innovation partnerships identified as potentially valuable but requiring careful consideration in all three countries (Annex I).

The legal framework for AI procurement in Spain is currently evolving, characterized by strategic initiatives and the application of general public procurement law with new considerations for the specificities of AI.[14] While Spain adopted a National Artificial Intelligence Strategy (ENIA) in December 2020, which sets out a vision for the promotion of AI in line with European strategies and Spain’s Digital Agenda 2025, specific legal guidelines dedicated exclusively to AI procurement by public authorities are still being developed. Surprisingly, the National Public Procurement Strategy 2023-2026 makes minimal reference to the acquisition of advanced technologies, focusing instead on their potential to support SME participation. Some Autonomous Communities, such as Galicia, are moving forward with draft legislation specifically aimed at regulating the design, acquisition, implementation and use of AI systems within their administrations.[15]

Germany, whilst also lacking dedicated AI procurement guidelines, benefits from established guidance for IT procurement in general, which it views as substantially helpful in the AI procurement context. Cooperative procurement is considered a relevant approach for AI acquisition in Germany,[16] given the numerous collaborations between administrative bodies for complex software. The involvement of private parties in AI procurement is subject to general procurement principles. Preliminary market consultations can be used, and technical specifications should ideally be product-neutral unless a departure is justified.[17] The frequent use of negotiated procedures for AI-related procurements in Germany reflects the complexity of these acquisitions but also raises potential concerns around competition. Direct award procedures without prior publication are permitted under strict conditions,[18] such as when an AI solution can only be delivered by a particular provider for technical reasons, with the contracting authority bearing the burden of proof. While case law has tightened the requirements for justifying direct awards based on technical necessity, it is acknowledged that the specific need for customized, high-quality, and controllable AI solutions can sometimes justify purchasing from a particular provider without prior publication.[19] German public procurement law, while not AI-specific, provides a framework adaptable to the procurement of AI systems, with ongoing attention to the unique aspects of this technology.

The situation in Italy shows that while specific guidelines for AI procurement are not prominent, the new Public Procurement Code contains innovative provisions relevant to AI, in particular on transparency and the need for human oversight of algorithmic decision-making.[20] Article 30(1) allows for the use of AI tools and distributed ledger technologies to improve the efficiency of procurement processes. The Code sets strict standards for automated and AI-based decisions in procurement, including the principles of transparency and accountability, the right of economic operators to be informed and receive explanations, the inclusion of human oversight with the ability to override decisions, and measures to prevent algorithmic bias. Contracting authorities are also obliged to provide the source code and related information necessary to understand the logic behind the technological solutions used, which is particularly relevant for AI systems. While the new Code allows for more flexible procurement procedures, their actual use in the specific context of AI may be limited by a potential lack of specialized capacity within public authorities. Direct award procedures without prior publication are permitted under Article 32(2)(b) of Directive 2014/24/EU, as implemented in Article 76 of the new Italian Code, in specific circumstances such as the absence of competition for technical reasons, the need to protect exclusive rights (including intellectual property), or to avoid incompatibility or disproportionate technical difficulties. However, these require adequate justification. The prevalence of direct awards in Italy’s ICT and AI procurement, often justified on “technical” grounds, carries the risk of vendor “lock-in”.

Across all three case studies, an asymmetry in knowledge and expertise between public sector procurement teams and the AI vendor market emerges as a critical challenge. This disparity can impede the ability of public organizations to effectively assess vendor claims, conduct thorough due diligence, and fully comprehend the ethical and societal implications associated with AI deployment. While not extensively detailed in each country’s specific section, this underlying issue likely contributes to the cautious adoption and potential implementation challenges observed.

Issues related to data quality and the limitations of existing technological infrastructure are also barriers in Germany, Spain and Italy. The complexities involved in implementing effective AI solutions, such as the challenges faced by Italy’s National Telemedicine Platform, suggest underlying hurdles related to data management and infrastructure readiness. All three countries recognize the importance of transparency and explainability of algorithms, reflecting a wider concern. Germany and Spain face constraints in achieving full transparency due to the legal protection of trade and business secrets. Italy has introduced a legal framework to promote transparency in algorithmic decision-making, but practical implementation and consistent access to this information remain ongoing challenges.

Overall, Germany, Spain and Italy are actively exploring the integration of AI into their public administrations and adapting their procurement practices accordingly. However, they face common fundamental challenges in establishing clear, AI-specific guidelines, overcoming definitional ambiguities, addressing expertise gaps, ensuring robust data governance, and effectively assessing the multiple impacts of AI. Each country is at a different stage in this evolution, with different approaches to balancing innovation with the principles of transparency, competition and ethical considerations in the public procurement of AI systems.

The overarching need is for a more coherent, collaborative and adequately supported strategy at the European level to ensure the responsible and effective deployment of AI in the public sector.

IV. The Imperative for Clear Guidance in Public Procurement

The complexity of the AI Act poses significant challenges for national public authorities engaged in AI procurement. The AI Act is a large piece of legislation with technical language, a hierarchical structure and numerous internal and external cross-references, resulting in structural, linguistic and relational complexity.[21] This inherent complexity can make it difficult for procurement teams within public authorities to fully understand and consistently interpret its provisions. Local government already faces a significant burden in navigating and interpreting existing diverse guidance and legislation related to AI and data-driven systems. The added layer of a complex AI Act could exacerbate this challenge, demanding significant expertise in both AI technologies and the intricacies of the new regulation. This is particularly pertinent given the often-noted imbalance of expertise between under-resourced local authorities and private AI companies during procurement negotiations.

In addition, the AI Act’s detailed provisions on various aspects of AI, such as prohibited practices, high-risk systems, and obligations on providers and deployers, require careful consideration during the procurement process to ensure compliance. Public authorities, especially those with limited resources and expertise, may struggle to translate these complex legal requirements into effective technical specifications, selection criteria and contract terms. Procurement teams need clearer support to procure AI effectively and ethically, and the AI Act’s complexity, absent clear and practical accompanying guidance, risks hindering this goal.

The lack of consensus on the definition of ‘AI’ and other key terms related to societal benefit within existing procurement guidance should also be pointed out. The AI Act provides its own definition of an AI system, and while this aims for harmonization, the potential for discrepancies and the need to align procurement practices with this new definition could create initial confusion and tension for public authorities.

On the other hand, the EU’s drive for regulatory simplification could potentially alleviate some of these tensions if applied thoughtfully to the AI Act. Simplification efforts could lead to greater clarity in the regulatory requirements, making it easier for national authorities to understand and implement the Act’s provisions consistently. Simplified guidelines or interpretations of the AI Act’s technical requirements could assist public authorities in establishing their conformity assessment procedures.

However, poorly conceived simplification efforts could lead to ambiguities or omissions that make consistent interpretation and enforcement across Member States more challenging. It is crucial that simplification does not undermine the regulatory foundation or weaken the essential protections enshrined in the AI Act. Any future simplification must carefully balance the desire to reduce burdens with the need to maintain effective regulation of a rapidly evolving technology to protect citizens and ensure a level playing field.

In the context of AI procurement, simplification efforts that do not provide sufficient clarity on how to translate the principles of the AI Act into practical procurement processes could be counterproductive. Governments need evidence-based best practice guidance and policies that clarify key terminology and support the development of skills in AI procurement. If simplification results in a lack of specific guidance on how to incorporate the AI Act’s requirements for high-risk systems (e.g. regarding data quality, documentation, transparency, human oversight) into procurement documents, it could leave public authorities struggling to ensure they are procuring AI systems that are compliant, safe and ethical. Whilst the AI Act stipulates requirements for AI systems, it is improbable that it will have a direct impact on the regulation of procurement itself. This lends further credence to the notion that public authorities will require clear national-level guidance on the integration of the AI Act’s principles into their procurement legal frameworks and practices. Poorly executed simplification at the EU level may not adequately address this need, potentially creating further tension.

Conclusion

The intricacies inherent in the AI Act present significant challenges for national public authorities in the effective procurement of AI systems that comply with its stipulations and uphold ethical principles. Whilst simplification efforts hold the potential to reduce these challenges through greater clarity, they must be managed with caution to avoid undermining the Act’s fundamental objectives and creating further ambiguities that could impede effective and consistent AI procurement across the EU.

Addressing these multifaceted issues necessitates a coherent and collaborative strategy at the European level. This strategy would need to provide clear, unambiguous, and actionable guidance specifically tailored for the procurement of AI within the public sector, a framework currently lacking. Such a strategy could encompass the development of clear definitions for ‘AI systems’ and other key terms to ensure consistent interpretation and application of the AI Act across Member States.

Developing evidence-based best practice guidance and policies at the national level may also help public authorities translate the AI Act’s principles into practical procurement processes, including the specification of technical requirements and the integration of obligations for high-risk systems. It is imperative to establish effective mechanisms for consultation and collaboration between the European Commission and EU Member States to support the development of implementation guidelines and any potential future amendments to the AI regulatory framework.

In conclusion, the development of a comprehensive EU strategy is imperative to ensure the responsible and effective deployment of AI in the public sector, safeguarding fundamental rights and promoting public interest while navigating the inherent complexities of AI technology and its regulation.

Annex I. Cross-Comparison of Germany, Italy, and Spain

These statements are attributable only to the author(s), and their publication here does not necessarily reflect the view of the other members of the AI-Regulation Chair or any partner organizations.

This work has been partially supported by MIAI @ Grenoble Alpes, (ANR-19-P3IA-0003) and by the Interdisciplinary Project on Privacy (IPoP) of the Cybersecurity PEPR (ANR 22-PECY-0002 IPOP).

[1] The impact of the AI Act on the public procurement of AI models is less direct, as the Regulation is primarily aimed at the providers of these models, with obligations relating to technical documentation, transparency, copyright compliance and, for systemic risk models, risk assessment and mitigation (see Chapter V of the AI Act).

[2] Article 6 and Annex III of the AIA

[3] Many AI systems used in the public sector, such as those related to access to public services and benefits, law enforcement, migration, asylum, border control, administration of justice and democratic processes, are classified as high risk.

[4] Such as those related to the system’s design, the governance of training data, the provision of comprehensive technical documentation, the transparency of the system’s operation, the facilitation of human oversight, and the assurance of robustness, accuracy, and cybersecurity

[5] Article 26 of the AIA

[6] As listed in Article 27, including many used by public authorities to assess individuals

[7] Article 27(1) of the AIA

[8] On November 25, 2024, Bulgaria’s Sofia District Court made a request for a preliminary ruling to the CJEU relating to the provisions on automated decision-making (“ADM”) under the AI Act. Case C-806/24 relates to a claim made by a telecoms company against a consumer who did not pay his bills. The consumer argues that the telecoms company’s method of automatically calculating fees constitutes an ADM system subject to Article 86(1) of the AI Act, and raises questions about the transparency, human review, and fairness aspects of the ADM system.

[9] Aleph Alpha, a German AI start-up, has collaborated with the regional government of Baden-Württemberg to develop the AI-based text assistant “F13” via the “InnoLab_bw” platform. Aleph Alpha also recently concluded a framework agreement with the Bavarian state government for the joint development of administrative AI systems. These instances illustrate the growing trend of government entities working with AI developers and integrating AI into their operations.

[10] Preparing clear and comprehensive tender specifications is another significant hurdle, particularly in defining the subject matter of the AI service and addressing the complexities of data ownership and intellectual property rights. Ensuring that tenderers/applicants are competent and efficient organizations is crucial when dealing with government data, but established standards and certifications for AI in public procurement are currently limited. The increasing importance of intellectual property rights, particularly in relation to potential copyright infringements in the AI sector, could also introduce new grounds for exclusion in public procurement.

[11] In particular, Germany pointed to the Commission’s failure to consult properly before issuing these crucial guidelines.

[12] Procurement of AI Community, Proposal for standard contractual clauses for the procurement of Artificial Intelligence (AI) by public organizations Version September 2023 (draft) – Non High Risk version; Procurement of AI Community, Proposal for standard contractual clauses for the procurement of Artificial Intelligence (AI) by public organizations Version September 2023 (draft) – High Risk version.

[13] Similar AI systems for education, like those analyzing student performance, could be classified as either high-risk (evaluating learning outcomes) or non-high-risk (providing suggestions with human oversight). This distinction, based on the intended purpose and level of human intervention, will dictate whether the “full” or “light” version of the contractual clauses is used in procurement. Careful interpretation of the EU AI Act is therefore crucial, and differing interpretations may lead to variations in application.

[14] Krönke, C., & Fernández, P. V. (Eds.). (2025). Buying AI. Cheltenham, UK: Edward Elgar Publishing.

[15] European Committee of the Regions: Commission for Economic Policy, Fondazione FORMIT, Trilateral Research Limited, Fontana, S., Errico, B., Tedesco, S., Bisogni, , Renwick, R., Akagi, M., & Santiago, N. (2024). AI and GenAI adoption by local and regional administrations, European Committee of the Regions.

[16] Scholl, M. Christa (2020). Building Competence: Expectations, Experience, and Evaluation of E-Government as a Topic in Administration Program at the TH Wildau – A Case Study. 3 Systemics, Cybernetics and Informatics 18.

[17] Section 121 of the German Act against Restraints of Competition (“Gesetz gegen Wettbewerbsbeschränkungen” – GWB) and Sections 31 to 34 of the Procurement Regulation (“Vergabeverordnung” – VgV)

[18] Article 32(2) PPD lists three situations in which the use of the negotiated procedure without prior publication is allowed for public works contracts, public supply contracts, and public service contracts. According to lit. b, direct awards are justified where the works, supplies, or services can be supplied only by a particular economic operator.

[19] OLG Düsseldorf, Order, 12 July 2018, VII-Verg 13/17.

[20] Roberto Cavallo Perin, Marco Lipari, and Gabriella M. Racca (eds), Contratti pubblici e innovazioni per l’attuazione della legge delega (Jovene 2022).

[21] See the forthcoming article from the AI-Regulation Chair.